Internal iterators like foreach tend to do very well in micro-benchmarks on the JVM. In fact, they often do as well as the equivalent manual while or for loop. There is a catch, however, and it’s easy to miss it in micro-benchmarks.

A few months ago, David MacIver forwarded an email from Martin Odersky to the scala-internals mailing list about this. The problem as described by Martin:

“The reason seems to be that the foreach itself is not inlined, so the call to the closure becomes megamorphic and therefore slow.”

I was curious about the benchmarks used to measure this and Tiark Rompf (who had performed the measurements) provided the source code. I said I’d try to take a look the next weekend and I did it… more than 3 months later. Well, better late than never. ;)

There are 3 benchmarks:

- Benchmark A: traverse a collection with N elements

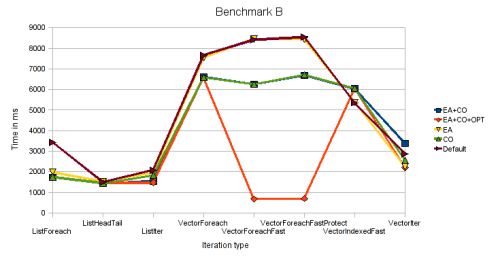

- Benchmark B: traverse a collection with N elements, inside the loop/closure traverse another collection with N2 elements 3 times

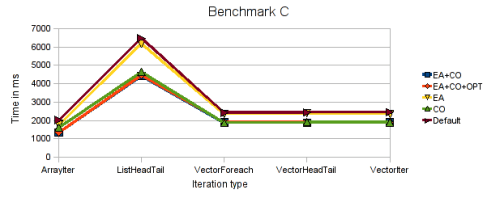

- Benchmark C: build a collection (front to back) of N elements
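
To make the three shapes concrete, here is a minimal sketch of what each benchmark does. It is not Tiark's code: the object name, the method names, the choice of `Array[Int]` and `Vector[Int]`, and the summing work inside the loops are all assumptions made for illustration.

```scala
// Hypothetical illustration of the three benchmark shapes; not the original benchmark code.
object BenchmarkShapes {
  // Benchmark A: traverse a collection with N elements (here: sum them).
  def traverse(xs: Array[Int]): Long = {
    var sum = 0L
    xs.foreach(x => sum += x)
    sum
  }

  // Benchmark B: for every outer element, traverse the inner collection 3 times.
  def nestedTraverse(outer: Array[Int], inner: Array[Int]): Long = {
    var sum = 0L
    outer.foreach { x =>
      var pass = 0
      while (pass < 3) {
        inner.foreach(y => sum += x * y)
        pass += 1
      }
    }
    sum
  }

  // Benchmark C: build a collection of N elements, front to back.
  def build(n: Int): Vector[Int] = {
    var v = Vector.empty[Int]
    var i = 0
    while (i < n) {
      v = v :+ i
      i += 1
    }
    v
  }
}
```

Each method returns a value derived from the whole traversal, which makes it harder for the JIT to discard the work.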

Various approaches are used for each benchmark with various collection types. One weakness of these benchmarks is that they don’t include a verification mechanism to ensure that all benchmarks produce the same result. However, they include code that tries to prevent the JIT from performing unfair optimisations (e.g. print something if an element in the collection matches a certain condition).
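
One way such a verification step could look is sketched below. This is an assumption about what would be useful, not something the benchmark suite contains: every variant returns a checksum, and a small helper reports which variants disagree with the first one. The `Sentinel` guard mimics the "print something if an element matches a condition" trick; all names here are hypothetical.

```scala
// Hypothetical verification sketch; none of these names come from the benchmark suite.
object VerifiedTraversal {
  // Assumed never to occur in the test data, so the println never actually runs.
  private val Sentinel = Int.MinValue

  private def guard(x: Int): Unit =
    if (x == Sentinel) println("sentinel found")

  def indexedSum(xs: Array[Int]): Long = {
    var sum = 0L
    var i = 0
    while (i < xs.length) {
      val x = xs(i)
      guard(x)
      sum += x
      i += 1
    }
    sum
  }

  def foreachSum(xs: Array[Int]): Long = {
    var sum = 0L
    xs.foreach { x =>
      guard(x)
      sum += x
    }
    sum
  }

  // The variants to compare, by name.
  val variants: Seq[(String, Array[Int] => Long)] = Seq(
    ("indexed", (xs: Array[Int]) => indexedSum(xs)),
    ("foreach", (xs: Array[Int]) => foreachSum(xs))
  )

  // Names of the variants whose checksum differs from the first variant's.
  def mismatches(xs: Array[Int]): Seq[String] = {
    val expected = variants.head._2(xs)
    variants.collect { case (name, f) if f(xs) != expected => name }
  }
}
```

Running `VerifiedTraversal.mismatches` once on the benchmark input before timing anything would catch a variant that silently computes something different.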

The original results produced by Tiark can be found here.

I added a few benchmarks that use plain arrays (RawArrayIndexed, RawArrayForeach, RawArrayForeachMega), made minor changes to the scripts and pushed the code to a GitHub repository. I left the rest of the Scala code as it was to make it easy to compare with the original results and ran the benchmark with various JVM settings to see what effect they would have. All the tests shared the following:

- Dual quad-core Xeon E5462 2.80GHz

- 14 GB RAM

- Fedora 11

- Scala 2.8.0.r19261

- Revision 59521431f5c118b73e35b0b396e3efd6aecec3dd of the project

- 64-bit JDK 6 Update 18 early access b03

- JVM base settings: -Xms1G -Xmx1G -XX:+UseParallelGC -XX:+UseParallelOldGC

JDK 6 Update 18 is scheduled to be released in Q4 2009 and it includes HotSpot 16. Even though JDK 6 Update 14 (HotSpot 14) introduced compressed references and scalar replacement, HotSpot 16 includes improved compressed references and many crucial fixes to both features. According to my testing, these features are now approaching production-level stability, and the OpenJDK engineers seem to agree, as both are enabled by default in HotSpot 17 (which will eventually hit JDK 6 too).

Interested in how these features would affect the performance in these benchmarks, I ran them with various combinations. I also added Scala’s compiler -optimise flag in some cases.

The original benchmark from Tiark used 3 collection types: array (java.util.ArrayList), list (scala.List, an immutable singly linked list) and vector (an earlier version of the immutable vector that has recently been added to Scala 2.8). I added JVM arrays and they are shown as “rawarray” in the charts. Finally, we get to the actual numbers.

There are some interesting data points here:

- Compressed references are a _huge_ win. RawArrayIndexed went from 500ms to 142ms and many of the vector operations were much faster.

- Escape analysis (which enables scalar replacement) doesn’t seem to have much of an effect.

- scalac -optimise doesn’t seem to have much of an effect.

- foreach is misleadingly fast in micro-benchmarks, but it’s easy to bring it down to earth. RawArrayForeach performs similarly to RawArrayIndexed, but RawArrayForeachMega is 10 times slower. The latter simply calls foreach with a few different anonymous functions during the collection creation phase, causing the call site inside foreach to become megamorphic. Once this happens, the only hope for good performance is that the foreach method gets inlined, and that doesn’t seem to happen here. With this in mind, it seems like ticket 1338 (Optimize simple for loops) is a good idea.
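
The megamorphic effect can be sketched as follows. This is a hand-written illustration rather than the RawArrayForeachMega source: the `foreach` helper, the object name and the closures are assumptions. The key point is that `f(xs(i))` is a single call site shared by every caller of `foreach`, so once it has seen several different function classes the JIT can no longer treat it as a call to one known target.

```scala
// Hypothetical illustration of a megamorphic call site; not the benchmark's code.
object MegamorphicSketch {
  // Stand-in for a collection's foreach. The f(...) call below is ONE call site,
  // shared by every caller of this method unless foreach itself gets inlined.
  def foreach(xs: Array[Int])(f: Int => Unit): Unit = {
    var i = 0
    while (i < xs.length) {
      f(xs(i))
      i += 1
    }
  }

  // Calls foreach with three distinct closures, so the shared call site
  // observes three different Function1 classes.
  def pollute(xs: Array[Int]): Long = {
    var a = 0L
    var b = 0L
    var c = 0L
    foreach(xs)(x => a += x)
    foreach(xs)(x => b += x * 2)
    foreach(xs)(x => c += x * 3)
    a + b + c
  }

  // The loop being measured: same work as an indexed sum, but it goes
  // through the shared call site above.
  def sum(xs: Array[Int]): Long = {
    var s = 0L
    foreach(xs)(x => s += x)
    s
  }
}
```

In a benchmark that only ever calls `sum`, the call site sees a single closure class and can be optimised aggressively. Calling `pollute` first is roughly what separates the "Mega" variant from the plain one.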

Once again, compressed references are a large factor in some benchmarks (halving the time taken in some cases).

The new bit of information is that scalac -optimise causes a huge improvement in VectorForeachFast and VectorForeachFastProtect. This makes sense once one considers one of the findings from the previous benchmark. We said that inlining of foreach is of extreme importance once a call site is megamorphic, and this is precisely what -optimise does in this case (the JVM fails to do so at runtime otherwise). Sadly, -optimise cannot do this safely in many cases, as shown by the results for VectorForeach.

Once again, compressed references provide a nice boost. This option looks like a winner on 64-bit JVMs (if you don’t need a heap larger than 32GB): it saves memory and gives better performance. The usual disclaimer applies, though: you should benchmark your own application instead of relying on micro-benchmarks when deciding what JVM options to use.

The complete results are also available. Feel free to play with the source code and provide your own numbers, fixes and/or improvements.